Article: Beyond the Feature Matrix: Evaluating Generative Tools by Workflow Velocity

If you spend enough time looking at the landing pages for generative media platforms, a sense of "feature blindness" begins to set in. Every tool promises a background remover, an upscaler, and a text-to-image generator. On paper, most modern creative suites look identical. Their comparison tables are filled with green checkmarks that suggest parity, yet anyone who has actually tried to move a project from a rough concept to a client-ready asset knows that these checklists are deceptive.

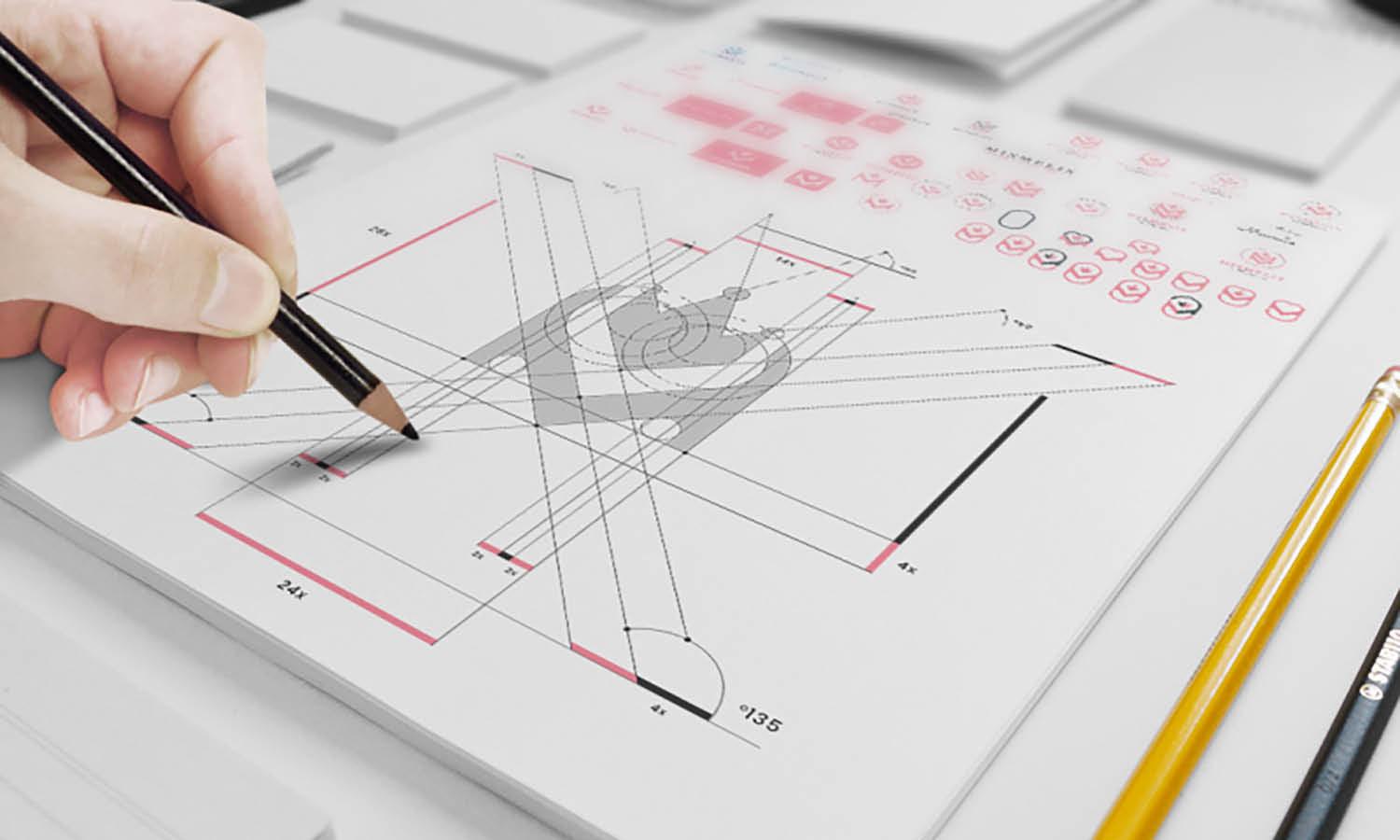

The reality of creative production is not found in a list of features, but in the friction between them. A tool might have an impressive "object removal" feature, but if the underlying model fails to understand global lighting, the user spends twenty minutes manually color-correcting the patch. In a high-volume marketing or content environment, the only metric that truly matters is "workflow velocity"—the speed at which you can bridge the gap from raw prompt to polished output without leaving your primary environment.

The Illusion of Feature Parity in Generative Media

Most software evaluations are still stuck in a legacy mindset. We compare generative tools the way we once compared spreadsheet software: counting buttons and measuring file compatibility. However, generative AI is non-deterministic. Two tools can both offer a "background remover," but one might use a legacy segmentation model that struggles with fine hair, while another uses a modern latent-aware model that understands depth and transparency.

The hidden cost of modern AI creation is "tool hopping." It is common for creators to generate an image in one specialized platform, move to another for upscaling, jump to a third for a face swap, and then finally land in a legacy editor for finishing touches. Each jump is a point of friction that increases cognitive load and introduces the risk of data loss or stylistic drift. When we evaluate a tool, the question shouldn't be "Does it have an upscaler?" but rather "How many manual steps are required to make the upscale look natural?"
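
One way to make the "manual steps" question concrete is to log each step of a workflow and count the tool switches. The sketch below is illustrative only: the `Step` record, the tool names, and the timings are hypothetical, not measurements from any real platform.

```python
from dataclasses import dataclass


@dataclass
class Step:
    tool: str       # hypothetical tool name
    action: str
    minutes: float  # hand-timed duration (illustrative numbers)
    manual: bool    # True if a person had to fix something by hand


def summarize(steps):
    """Return total minutes, manual step count, and number of tool hops."""
    hops = sum(1 for prev, cur in zip(steps, steps[1:]) if prev.tool != cur.tool)
    return {
        "total_minutes": sum(s.minutes for s in steps),
        "manual_steps": sum(1 for s in steps if s.manual),
        "tool_hops": hops,
    }


# Two made-up logs of the same job: one spread across tools, one in a single suite.
fragmented = [
    Step("generator_a", "generate image", 3, False),
    Step("upscaler_b", "upscale", 5, False),
    Step("swapper_c", "face swap", 6, False),
    Step("legacy_editor", "color-correct patch", 20, True),
]
integrated = [
    Step("suite_x", "generate image", 3, False),
    Step("suite_x", "upscale", 2, False),
    Step("suite_x", "face swap", 3, False),
    Step("suite_x", "touch up", 4, True),
]

fragmented_summary = summarize(fragmented)
integrated_summary = summarize(integrated)
```

Logging the same job in each candidate tool and comparing the summaries gives a rough, repeatable basis for the comparison, even when the timings are taken by hand.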

When we reduce our evaluation to a feature matrix, we ignore the quality of the underlying models. A platform that offers access to multiple foundation models, such as Flux or Nano Banana, is generally more valuable than one locked into a single proprietary model, even if the feature lists look identical. Model variety allows the creator to match the engine to the specific intent of the project, whether that is high-fidelity realism or stylistic abstraction.

Image Source: https://piceditor.ai/

Measuring Prompt Fidelity and Creative Intent

A significant challenge in evaluating generative tools is that "quality" is subjective. However, prompt fidelity—the degree to which a model adheres to complex, nuanced instructions—is measurable. When testing a platform, we should look past the "pretty" images in the marketing gallery. Most models can generate a generic sunset; few can accurately render a "submerged ethereal dream in a Victorian room" where the refraction of light on the fabric of a gown matches the density of the water.
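
A simple way to put a number on prompt fidelity is a checklist: break the prompt into individual requirements, have a reviewer mark each one as met or not, and report the fraction met. The sketch below assumes that approach. The requirement wording and the True/False marks are made-up reviewer judgments, not results from any real model.

```python
def prompt_fidelity(checks):
    """Fraction of prompt requirements a reviewer marked as satisfied."""
    if not checks:
        raise ValueError("at least one requirement is needed")
    return sum(1 for ok in checks.values() if ok) / len(checks)


# Requirements pulled from the example prompt above; marks are hypothetical.
review = {
    "subject is submerged": True,
    "room reads as Victorian": True,
    "gown fabric is visible": True,
    "light refraction matches water density": False,
}

score = prompt_fidelity(review)  # 3 of 4 requirements met
```

The score is only as good as the checklist, so it helps to write the requirements before looking at any output.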

This is where the choice of model becomes critical. For instance, the Flux model has gained traction specifically because of its spatial reasoning and its ability to render legible text within images, two areas where older models frequently failed. If you are building a production pipeline, you need to know whether the AI Image Editor you are using lets you switch between models.

The distinction between a tool that generates a "good image" and one that generates the "right image" often comes down to control. Prompting is only the first 80% of the work. The final 20% requires granular adjustments. If the editor doesn't understand the context of the original generation, you’re back to square one. We have to acknowledge an uncomfortable truth: even the best models currently have a significant margin of error when it comes to specific human anatomy or complex mechanical parts. Evaluation must account for how the tool handles these inevitable failures.

The Efficiency of the Integrated AI Image Editor

The most common point of failure in a creative workflow is the "export-import" cycle. When a creator generates an image and notices a small hallucination—perhaps an extra finger or a stray shadow—the speed at which they can fix it determines their daily output. This is why an integrated AI Image Editor is becoming a requirement rather than a luxury.

In an integrated environment, the editor and the generator share the same "context." When you use an object eraser or a face swap feature within a unified platform, the edit can draw on the same model and generation context that produced the image, rather than painting pixels onto a flattened export. This tends to produce more cohesive results than taking that same image into a separate, non-AI-aware photo editor.

Consider the task of creating a consistent set of social media assets. If you can generate a base image, perform a face swap to align with a specific brand persona, and then use an AI Image Editor to expand the canvas (outpainting) for different aspect ratios—all in one tab—you have essentially collapsed a four-hour workflow into fifteen minutes. This velocity is the true competitive advantage for agencies and indie creators alike.

Image Source: https://piceditor.ai/

Testing for Production Realism and Edge Cases

To truly evaluate a tool, you must move beyond the "happy path" of simple prompts. Professional creators should develop a set of "stress tests" designed to break the model. This is where you see the difference between a consumer gimmick and a production tool.

A reliable stress test might involve:

- Text Overlays: Asking the model to render specific words in a specific font style to check for character coherence.

- Complex Lighting: Placing a light source in an unusual position (e.g., glowing shoes) to see if the shadows on the environment respond logically.

- Anatomical Interaction: Prompting for subjects holding objects or interacting with other people, which remains a primary failure point for many generative engines.
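
The three categories above can be kept as a small, reusable suite so that every tool is judged on the same prompts. This is a minimal sketch: the prompts are hypothetical examples, and the pass/fail marks are assumed to come from a human reviewer, not from an automated check.

```python
from collections import defaultdict

# Hypothetical prompts, one per stress-test category from the list above.
STRESS_TESTS = [
    {"category": "text_overlay",
     "prompt": "a shop sign that reads 'OPEN LATE' in serif capitals"},
    {"category": "complex_lighting",
     "prompt": "a runner at night wearing glowing shoes on wet pavement"},
    {"category": "anatomical_interaction",
     "prompt": "two people shaking hands while one holds a coffee cup"},
]


def pass_rate_by_category(results):
    """results: iterable of (category, passed) pairs from a human review."""
    totals = defaultdict(lambda: [0, 0])  # category -> [passed, attempted]
    for category, passed in results:
        totals[category][1] += 1
        if passed:
            totals[category][0] += 1
    return {c: p / n for c, (p, n) in totals.items()}


# Made-up review marks for one tool, for illustration only.
rates = pass_rate_by_category([
    ("text_overlay", True),
    ("text_overlay", False),
    ("complex_lighting", True),
    ("anatomical_interaction", False),
])
```

Running each prompt several times per tool matters here, because a single lucky or unlucky seed says little about a non-deterministic model.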

We must also be realistic about upscale quality. Many tools claim to upscale to 4K, but they often do so by "hallucinating" digital noise that looks like detail from a distance and falls apart upon close inspection. A production-ready tool recovers edges and textures rather than just smoothing them over. It is also worth planning for a failure rate of roughly 10-20%: no matter how advanced the AI Photo Editor is, some outputs simply cannot be saved. Recognizing this early prevents "sunk cost" time-wasting on a bad seed.
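
A failure rate translates directly into a generation budget. The sketch below assumes each attempt fails independently with the same probability, which is a simplification (a bad prompt tends to fail repeatedly). Under that assumption, the expected number of attempts per usable output is 1 / (1 - p), and the chance that every one of k attempts fails is p to the power k.

```python
def expected_attempts(failure_rate):
    """Average attempts per usable output, assuming independent attempts."""
    if not 0 <= failure_rate < 1:
        raise ValueError("failure_rate must be in [0, 1)")
    return 1 / (1 - failure_rate)


def chance_all_fail(failure_rate, attempts):
    """Probability that every one of `attempts` independent tries fails."""
    return failure_rate ** attempts


low = expected_attempts(0.10)        # about 1.11 attempts per usable output
high = expected_attempts(0.20)       # 1.25 attempts per usable output
three_misses = chance_all_fail(0.20, 3)  # about 0.008, i.e. under 1%
```

If a seed has already failed several times at these rates, the numbers suggest the problem is the seed or the prompt, not bad luck, which is a reasonable cue to start over.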

Consolidating the Stack with an All-in-One AI Photo Editor

As the market matures, we are seeing a shift away from "point solutions" toward comprehensive ecosystems. Platforms like PicEditor AI are focusing on this consolidation by bridging the gap between static imagery and video. In a traditional workflow, animating a static image requires a separate subscription, a different UI, and a new set of prompting logic.

By using an all-in-one AI Photo Editor, creators can maintain visual consistency across different media formats. For example, you can generate a high-fidelity image using a model like Seedream or Flux, refine it with integrated editing tools, and then immediately pass it to a video engine like Kling or Veo to animate it. This "one-click" transition is not just about convenience; it’s about maintaining the integrity of the creative vision throughout the entire lifecycle of the asset.

The AI Photo Editor category is evolving rapidly. We are moving toward a future where the distinction between "editing" and "generating" disappears entirely. In this environment, the most versatile tools are those that allow for "image-to-image" and "image-to-video" workflows without requiring the user to become a prompt engineer or a technical director. The goal is to spend more time on the "what" and less time on the "how."

The Verdict: Velocity as the Ultimate Metric

When choosing your creative stack, resist the urge to over-index on niche features that you might use once a month. Instead, look at the tasks you perform fifty times a day. How many clicks does it take to get a background removed? How quickly can you swap a face for a client mock-up? Does the platform give you access to the latest models, or are you stuck with a proprietary engine that is already six months out of date?
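
Weighting each task by how often it occurs makes this comparison explicit. The sketch below multiplies frequency by time per task; the task names, counts, and durations are hypothetical placeholders to be replaced with your own observations.

```python
def daily_minutes(tasks):
    """tasks: mapping of task name -> (times_per_day, seconds_each)."""
    return {name: count * seconds / 60 for name, (count, seconds) in tasks.items()}


# Hypothetical workload; replace with your own counts and timings.
tasks = {
    "background removal": (50, 30),  # 50 times a day, 30 seconds each
    "face swap mock-up": (10, 90),   # 10 times a day, 90 seconds each
    "niche filter": (1 / 30, 600),   # roughly once a month, 10 minutes each
}

cost = daily_minutes(tasks)
```

With these made-up numbers, background removal costs 25 minutes a day while the niche feature costs well under a minute, so shaving seconds off the frequent task matters far more than the rare one.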

The generative media landscape is too volatile for anyone to claim absolute certainty about which tool will be the leader next year. However, we can be certain that workflow friction is the enemy of creativity. By prioritizing tools that offer integrated generation and editing—combining the power of a modern AI Image Editor with the versatility of a multi-model AI Photo Editor—you insulate yourself against the inefficiencies of a fragmented pipeline.

The ultimate metric is velocity. If a tool allows you to iterate three times in the time it used to take to iterate once, that tool is not just a utility—it is a force multiplier for your creative output. Evaluate your tools by the time they save you, not the features they list.
